
Yixuan Huang

Hello, my name is Yixuan Huang. I am currently a Postdoctoral Research Associate at Princeton University, working with Prof. Tom Silver. I completed my Ph.D. in the Kahlert School of Computing at the University of Utah, where I was advised by Prof. Tucker Hermans. During my Ph.D., I was a Visiting Student Researcher (VSR) at Stanford University, working with Prof. Jeannette Bohg. I received my bachelor's degree in Computer Science and Engineering from Northeastern University in 2020. During my undergraduate years, I worked with Prof. Sicun Gao at UC San Diego. Here is my Research Statement.


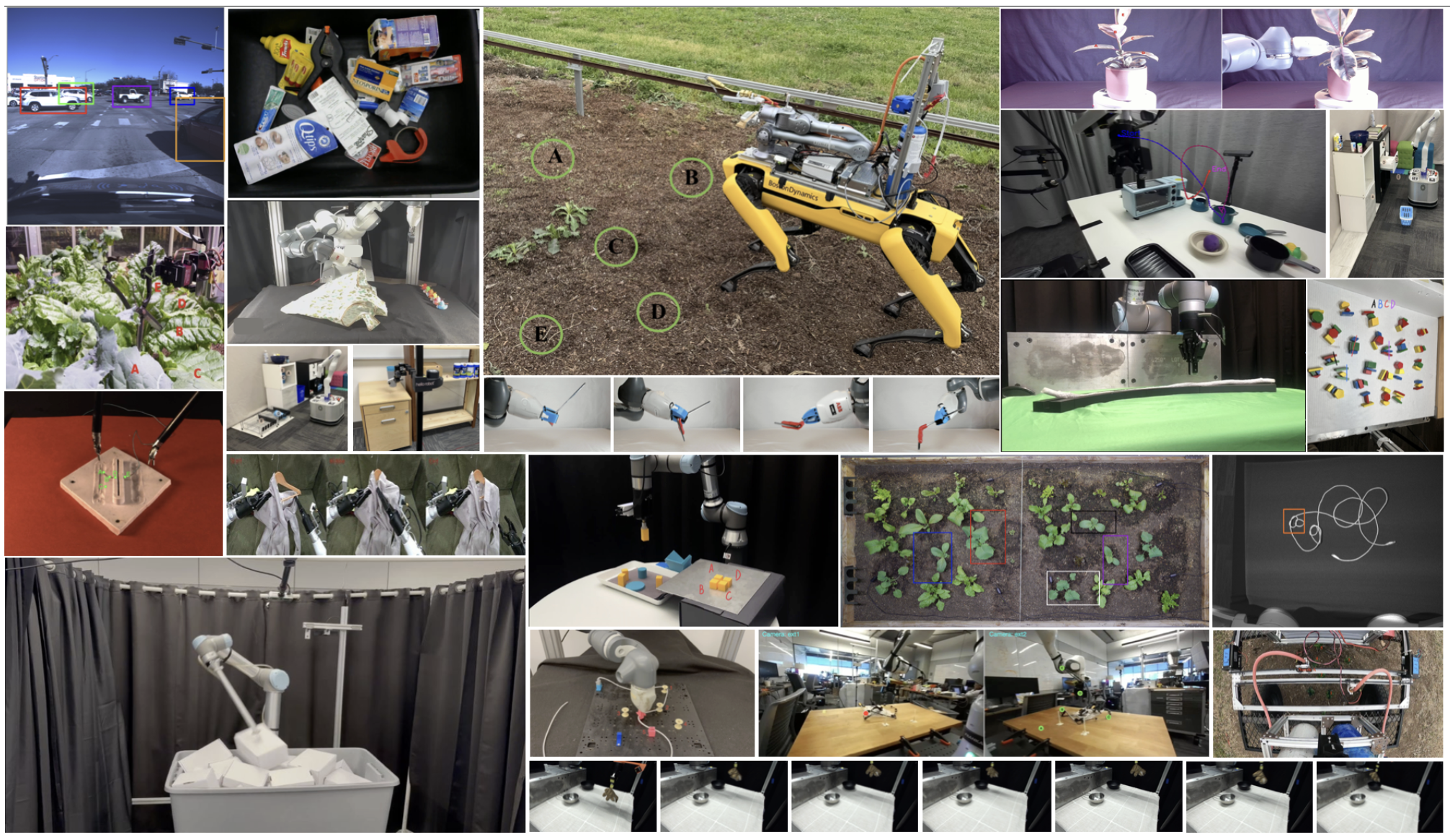

My research aims to develop general-purpose robotic systems capable of structured and adaptive physical reasoning.

Robots that interact with the physical world must reason about the kinematic and dynamic constraints imposed by their embodiment, their environment, and the task at hand.

These often-entangled constraints can turn semantically simple tasks into challenging puzzles.

To address these challenges, my research develops algorithmic frameworks that integrate perception, reasoning, learning, and planning within structured representations.

Concretely, I have developed:

- Methods for learning models that enable hierarchical planning directly from partial-view point clouds (RD-GNN, Points2Plans).

- Systems that detect failures, recover from them, and leverage them to reduce future errors over time (Fail2Progress).

- A benchmark that systematically studies robot physical reasoning across 25 environments (KinDER).


Yixuan Huang*, Bowen Li*, Vaibhav Saxena*, Yichao Liang, Utkarsh Aashu Mishra, Liang Ji, Lihan Zha, Jimmy Wu, Nishanth Kumar, Sebastian Schere, Danfei Xu, Tom Silver

Robotics: Science and Systems (RSS), 2026

project page / arXiv / code

Shuangyu Xie, Kaiyuan Chen, Ziyang Chen, Simeon Adebola, Yixuan Huang, Zehan Ma, Tianshuang Qiu, Wentao Yuan, Dhruv Shah, Pannag R Sanketi, Ken Goldberg

Robotics: Science and Systems (RSS), 2026

project page (coming soon) / arXiv (coming soon)

Lihan Zha, Asher James Hancock, Mingtong Zhang, Tenny Yin, Yixuan Huang, Dhruv Shah, Allen Z. Ren, Anirudha Majumdar

Robotics: Science and Systems (RSS), 2026

project page / arXiv / code

Mingen Li, Houjian Yu, Yixuan Huang, Youngjin Hong, Changhyun Choi

IEEE International Conference on Robotics and Automation (ICRA), 2026

(Best Paper Award on Robot Learning -- Finalist)

project page / arXiv

Yixuan Huang, Novella Alvina, Mohanraj Devendran Shanthi, Tucker Hermans

Conference on Robot Learning (CoRL), 2025

project page / arXiv

Yixuan Huang, Christopher Agia, Jimmy Wu, Tucker Hermans, Jeannette Bohg

IEEE International Conference on Robotics and Automation (ICRA), 2025

project page / arXiv / code

Yixuan Huang, Nichols Crawford Taylor, Adam Conkey, Weiyu Liu, Tucker Hermans

IEEE Transactions on Robotics (T-RO), 2024

project page / arXiv / code

Yixuan Huang, Jialin Yuan, Chanho Kim, Pupul Pradhan, Bryan Chen, Li Fuxin, Tucker Hermans

IEEE International Conference on Robotics and Automation (ICRA), 2024

project page / arXiv

Yixuan Huang, Adam Conkey, Tucker Hermans

IEEE International Conference on Robotics and Automation (ICRA), 2023

project page / arXiv / code

Yixuan Huang, Michael Bentley, Tucker Hermans, Alan Kuntz

International Symposium on Medical Robotics (ISMR), 2021

(Best Paper Award -- Finalist, Best Student Paper Award -- Finalist)

project page / arXiv


Undergraduate research project: This project focused on safe reinforcement learning. Our goal was to design RL algorithms that maximize cumulative reward over time while avoiding collisions. I began with a classic racecar example and built a simulation environment in PyBullet. We designed two sub-policies, one for each objective, and employed an additional factored policy to select between them. In our final evaluation on the test benchmarks, the approach achieved near-zero safety violations with low sample complexity.


Undergraduate research project: In this project, we used a drone to fly around an object and automatically capture a set number of photos for 3D reconstruction. We combined a reinforcement learning algorithm with state estimation to find an optimal drone trajectory for high-quality 3D reconstruction.


Ph.D. first-year rotation project: In this work, we take steps toward a system that learns to perform context-dependent surgical tasks directly from expert demonstrations. To this end, we present and evaluate three approaches for generating context variables from the robot's environment, which is represented as partial-view point clouds. The approaches range from fully human-specified to fully automated.


In the middle of my Ph.D., I worked with a KUKA iiwa robot equipped with a three-fingered underactuated hand with built-in TakkTile pressure sensors. I designed various manipulation primitives (e.g., grasp, place, dump, push) and executed them on the robot. I published three papers (RD-GNN, eRDTransformer, and DOOM and LOOM) using this robot.


During my visit to Stanford University, I worked with a custom holonomic mobile base combined with a Kinova arm. This robot can navigate, grasp, place, push, pull, and even toss objects! Using these primitives, it can also feed you snacks (e.g., an apple). I completed a project (Points2Plans) with this impressive robot.


For the final project of my Ph.D., I worked with a Stretch robot, a lightweight yet capable platform that I enjoy working with. For this project, which involves reasoning about failure cases, I designed multiple manipulation primitives for the Stretch.

- Reviewing:

  - International Conference on Robotics and Automation (ICRA) (2023 - Present)

  - Conference on Robot Learning (CoRL) (2023 - Present)

  - Robotics: Science and Systems (RSS) (2024 - Present)

  - International Conference on Intelligent Robots and Systems (IROS) (2024 - Present)

  - Robotics and Automation Letters (RA-L) (2024 - Present)

  - IEEE Transactions on Robotics (T-RO) (2025 - Present)

  - The International Journal of Robotics Research (IJRR) (2026 - Present)

  - International Conference on Learning Representations (ICLR) (2025 - Present)

  - Neural Information Processing Systems (NeurIPS) (2026 - Present)

  - IEEE Transactions on Artificial Intelligence (TAI) (2024)

  - IEEE Transactions on Instrumentation and Measurement (TIM) (2024)

  - Workshop on Learning Effective Abstractions for Planning (LEAP @ CoRL) (2024, 2025)

- Fall 2022: Teaching Assistant, CS 4300: Artificial Intelligence, University of Utah

- Spring 2022: Teaching Assistant, CS 4300: Artificial Intelligence, University of Utah

- Summer 2022: Robotics lab tour co-organizer, University of Utah Bridge Program

- Summer 2023: Robotics lab tour co-organizer, University of Utah Bridge Program

- International Conference on Robotics and Automation Best Paper Award on Robot Learning Finalist (2026)

- International Symposium on Medical Robotics Best Paper Award Finalist (2021)

- International Symposium on Medical Robotics Best Student Paper Award Finalist (2021)

- National Scholarship by Ministry of Education of China (2017)

- National Scholarship by Ministry of Education of China (2018)


Website source from Chris Agia